👋 Hi, I’m Andre and welcome to my newsletter Data Driven VC which is all about becoming a better investor with data and AI.

Join hundreds of funds in the 2026 DDVC Landscape survey to see which AI tools other firms use, how much they pay for data & engineering teams, and more. Participants can also win one of 3x €100 Amazon vouchers or 3x €1,500 The Lab Premium memberships.


Brought to you by Affinity - The AI Backbone for Data Driven VCs

Your firm already runs Claude, Gemini, or Copilot. Now connect them directly to your deal intelligence.

On April 23rd, Affinity walks through its hosted MCP server and AI chat beta — giving investment teams conversational CRM access, automatic meeting briefs, and a self-serve data layer that works with every AI tool in your stack.

No engineering required.

Welcome to another Data Driven VC “Insights” episode where we cover the most interesting research and reports about startups, GPs, LPs, AI & automation.

How Much Revenue Do You Need to Raise a Seed vs Series A vs Series B?

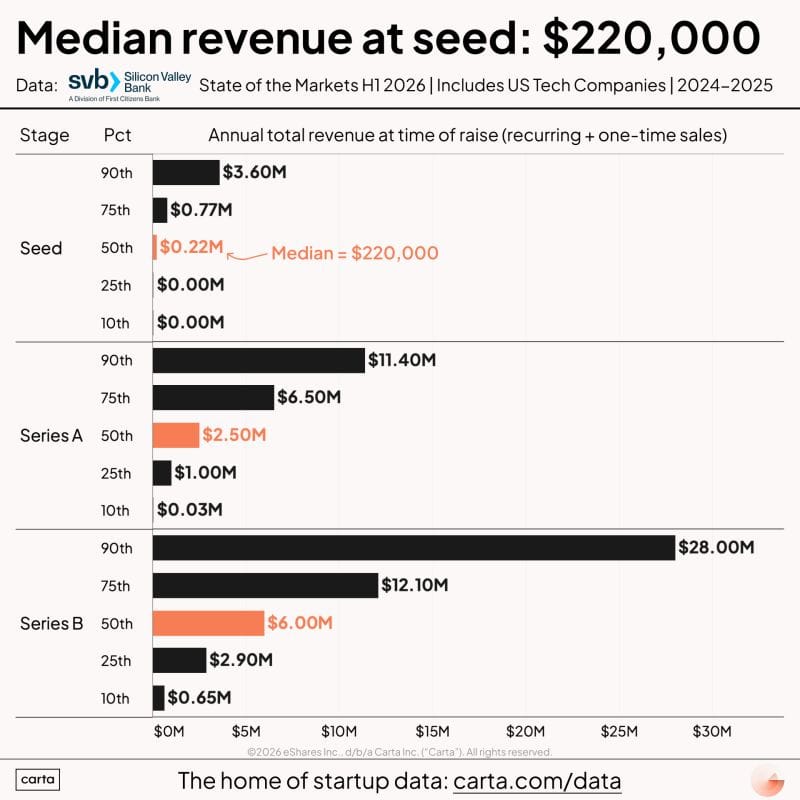

Peter Walker (Head of Insights at Carta) shared fundraising revenue benchmarks from SVB's State of Markets Report, H1 2026, showing wildly different revenue levels at time of raise across every stage.

Seed round revenue ranges from $0 to $3.6M, with a solid percentage of rounds going to companies with marginal or no revenue: The data confirms that pre-revenue seed rounds are alive and well, almost certainly driven by connected AI founders who can raise on team and thesis alone. But the variance is enormous, and comparison across companies at the same "stage" is increasingly meaningless.

The jump from median seed revenue to top-quartile Series A revenue is massive, and the "point of acceleration" keeps moving earlier for AI-native companies: Companies that raise seed with near-zero revenue face an increasingly steep climb to meet Series A expectations. AI-native startups that hit revenue traction early are pulling away from the pack, making it harder for slower-growth companies to catch up round over round.

The methodology covers only US tech companies, a group that may include pre-revenue biotech and other sectors where revenue is not expected at seed, potentially pulling medians down: Walker notes this caveat is important for interpreting the data. Investors should be careful benchmarking their own portfolio against aggregate figures without adjusting for sector mix.

✈️ KEY TAKEAWAYS

Revenue benchmarks by round are useful as calibration, not targets. The real signal is variance: the best seed companies today are either pre-revenue with extraordinary teams or already at $2M+ ARR. The middle is getting squeezed. For seed investors, the bar is bifurcating into conviction bets on people and traction bets on numbers, with very little room in between.

Seed Valuations Are Exploding

Peter Walker also shared Carta data showing top 5% seed valuations have hit $115M, a historically expensive level driven by the AI hype cycle.

Top 5% seed valuations reached $115M in Q4 2025, a level that is historically unprecedented and clearly decoupled from the rest of the market: As Bryce Roberts of Indie VC noted, the VC world is heading somewhere many experienced investors cannot relate to. Massive funds are playing at the earliest stages, wild valuations are being signed in hours rather than weeks, and secondaries are flying off the shelves.

The AI hype cycle is real and measurable even if the underlying technology is transformational: Walker makes an important distinction: AI can absolutely transform the world and still be a frenzied hype cycle where most investments do not result in returns. Both things can be true simultaneously. The data shows a clear bifurcation between the top 5% (AI-driven outliers) and the remaining 95% of seed rounds.

The gap between top 5% seed valuations ($115M) and the median seed round makes any market-wide average misleading: Investors looking at "average" seed valuations are seeing a blended number that masks two completely different realities. The top end is moving faster than ever while the broad market remains disciplined.

✈️ KEY TAKEAWAYS

For early-stage VCs, the strategic question is whether to compete at the top end or focus on the remaining 95% where valuations are rational and entry prices still support venture-scale returns. The data suggests that most of the alpha in the current cycle will come from either being in the top 5% deals early (hard, expensive) or from finding overlooked opportunities in the broad middle that the hype cycle is ignoring.

Join 1549+ investors in our free Slack group as we automate our VC job end-to-end with AI. Live experiment. Full transparency.

The Universities Behind America's Biggest Startup Exits

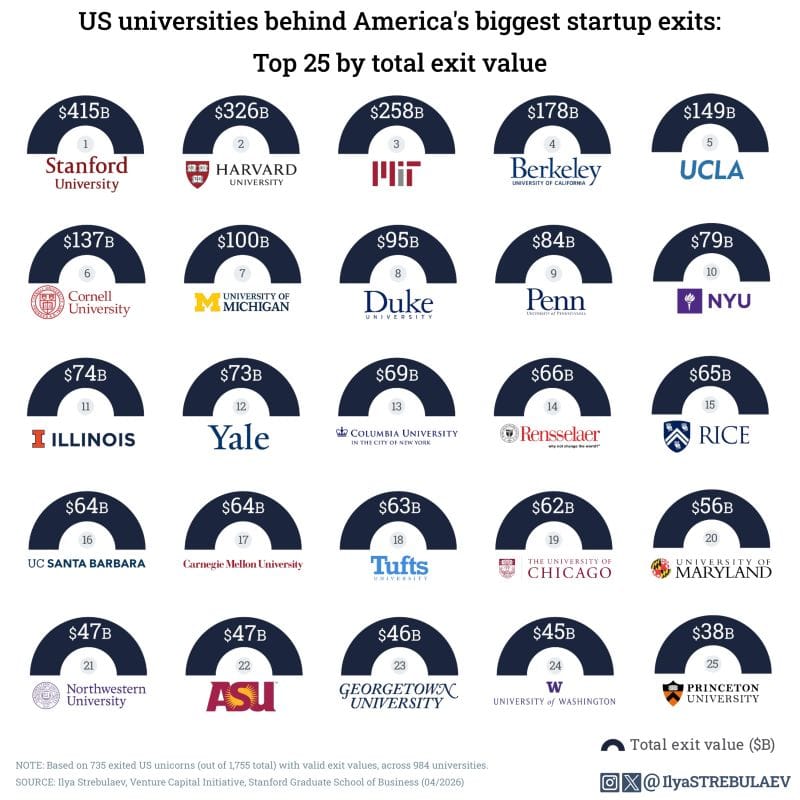

Ilya VC shared a ranking of universities by total founder exit value, covering the combined value of IPOs and acquisitions from graduates of each institution.

Stanford founders have generated $415B in total exit value, with Harvard and MIT following, but several non-elite schools punch well above their brand weight: Rensselaer Polytechnic Institute (#14, $66B) sits just two places behind Yale (#12, $73B). University of Illinois Urbana-Champaign ($74B) outperforms both Columbia and Yale. Rice University ($65B) is in the same tier as several Ivy League schools.

Public universities hold their own across the top 25, with Berkeley (#4), UCLA (#5), Michigan (#7), Illinois (#11), UC Santa Barbara (#16), Maryland (#20), ASU (#22), and UW (#24) all making the list: The data challenges the assumption that venture-backed startup success is concentrated exclusively in elite private institutions. State schools produce a significant share of billion-dollar founders.

The Ivy League is more spread out than expected, with Princeton at #25 ($38B) and Cornell at #6, while NYU comes in at #10 with $79B: The gap between Harvard (#2) and Princeton (#25) within the same league is enormous. NYU's strong showing likely reflects its proximity to the NYC startup ecosystem and strong business/engineering programs.

✈️ KEY TAKEAWAYS

For VCs building sourcing strategies, this data is a direct input to campus and community engagement. The concentration at Stanford is expected, but the strength of public universities and non-Ivy schools suggests that firms over-indexing on a handful of elite networks are systematically missing founder talent. The best origination strategies go where the data points, not where the brand signals.

AI Computing Capacity Doubling Every 7 Months

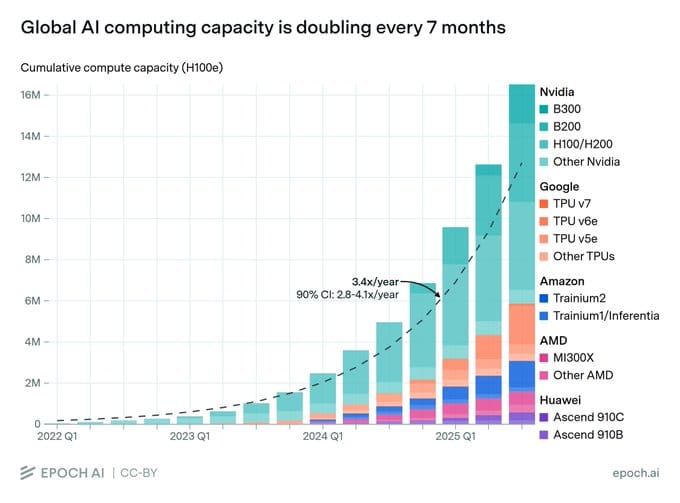

Apoorv Agrawal (Altimeter Capital) published an updated analysis of the Gen AI value chain, showing the ecosystem has grown 5x to ~$435B in annualized revenue but the economic structure remains deeply inverted.

The semiconductor layer still captures ~70% of all AI revenue ($300B) and ~79% of all gross profit, with NVIDIA alone representing ~80% of the semi layer at ~$250B annualized: The AI value chain is almost exactly the mirror image of the cloud stack, where apps capture 70% and semis capture 6%. At the current pace of profit share shift (~4 points per two years), it would take well over a decade for the app layer to reach cloud-like economics.

The application layer grew 12x in two years to ~$60B but is dominated by two companies: OpenAI and Anthropic together represent ~$40-50B and ~75% of the layer: A distant third tier includes coding AI players like Cursor, followed by fast-growing agent companies (ElevenLabs, Glean, Sierra, Perplexity, Replit, Lovable, Harvey, Abridge). Despite explosive percentage growth, the app layer added only ~$55B in absolute dollars vs. ~$225B for the semi layer.

Top 5 hyperscalers spent $443B in capex in 2025 (up 73% YoY) with $600B+ projected for 2026, roughly 75% directed at AI infrastructure: Every major hyperscaler is hedging with custom silicon. Google TPUs are now merchant hardware, forcing NVIDIA to cut pricing ~30% for some customers. Amazon's Trainium crossed $10B annual run rate growing triple digits. OpenAI signed a multiyear deal with Broadcom for 10GW of custom accelerators.
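The shares and growth figures quoted above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the rounded numbers from the text; the function names are illustrative. One assumption to flag loudly: the text does not say which share the ~4 points per two years refers to, so the last calculation assumes it is the semiconductor gross profit share falling linearly from 79% toward the 6% seen in the cloud stack.

```python
# Rounded figures quoted in the text, in $B of annualized revenue.
TOTAL_REVENUE = 435
SEMI_REVENUE = 300
NVIDIA_REVENUE = 250
APP_REVENUE = 60
APP_GROWTH_MULTIPLE = 12  # the app layer grew 12x in two years


def share(part, whole):
    """Fraction of `whole` represented by `part`."""
    return part / whole


def absolute_added(current, multiple):
    """Dollars added by a layer that grew `multiple`x to reach `current`."""
    return current - current / multiple


def years_to_close(gap_points, points_per_period=4.0, years_per_period=2.0):
    """Years needed to close a share gap at a constant linear pace."""
    return gap_points / points_per_period * years_per_period


semi_share = share(SEMI_REVENUE, TOTAL_REVENUE)      # roughly 0.69
nvidia_share = share(NVIDIA_REVENUE, SEMI_REVENUE)   # roughly 0.83
app_added = absolute_added(APP_REVENUE, APP_GROWTH_MULTIPLE)

# ASSUMPTION: the ~4-point shift applies to the semi gross profit share
# (79% today) converging on the cloud-stack level (6%).
years_to_cloud_like = years_to_close(79 - 6)

print(f"semi share of revenue: {semi_share:.0%}")
print(f"NVIDIA share of semi layer: {nvidia_share:.0%}")
print(f"app layer dollars added: ${app_added:.0f}B")
print(f"years to cloud-like split: {years_to_cloud_like}")
```

Under that assumption the linear extrapolation gives 36.5 years, which is consistent with "well over a decade" and shows how sensitive the conclusion is to the pace of the shift rather than to the starting point.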

✈️ KEY TAKEAWAYS

The most important number in this analysis: semis capture 79% of gross profit in AI vs. 6% in the cloud stack. That gap defines the entire investment landscape. For VCs backing AI application companies, the race is to build businesses that can sustain 50%+ gross margins while the infrastructure layer commoditizes underneath them. Custom silicon is the key variable: if ASICs succeed at scale, NVIDIA's margins compress and profit shifts up the stack faster.

Upgrade your subscription to access our premium content & join the Data Driven VC community

How to Build a Personal Knowledge Base

Andrej Karpathy shared a detailed workflow for using LLMs to build personal knowledge bases, describing a system where the majority of his token throughput now goes into manipulating knowledge rather than code. The post received 18.2M views.

The workflow: index source documents into a raw/ directory, then use an LLM to incrementally "compile" a wiki of .md files with summaries, backlinks, concept articles, and cross-links: Karpathy uses Obsidian as the IDE, Cursor as the LLM interface, and various CLI tools for Q&A and enhancement. The key insight is that the LLM maintains the wiki, not the human. You rarely edit manually; the LLM reads, writes, and maintains the entire knowledge structure.

The system creates a compounding knowledge loop: queries and explorations get "filed" back into the wiki, so personal research always accumulates rather than evaporating in chat windows: Karpathy runs LLM "health checks" over the wiki to find inconsistent data, impute missing information via web search, and discover interesting connections for new article candidates. The wiki is treated as a living, self-improving artifact rather than a static document.

No fancy RAG needed at small scale. The LLM auto-maintains index files and brief summaries, reading all important related data fairly easily when the wiki stays under a certain size: Karpathy notes this approach works because the context window handles the retrieval problem at small scale. He predicts the approach extends to fine-tuning, where the LLM would "know" the data in its weights rather than just the context window.
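To make the "index files and brief summaries" idea concrete, here is a minimal sketch of the bookkeeping side of such a wiki. It is not Karpathy's code: in the workflow described above an LLM writes and maintains these files, while this sketch only shows the deterministic part (an index, a backlink map, and a simple health check for links that point to pages that do not exist yet). The `[[Page]]` link syntax, the use of the first body line as a summary, and all function names are assumptions made for illustration.

```python
import re
from pathlib import Path

# Matches the target of [[Page]], [[Page|alias]] and [[Page#section]] links.
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def first_body_line(text):
    """Use the first non-heading, non-empty line as a brief summary."""
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            return line
    return ""


def outgoing_links(text):
    """Sorted, de-duplicated link targets found in one page."""
    return sorted({target.strip() for target in WIKILINK.findall(text)})


def build_index(pages):
    """pages maps page name -> markdown text.

    Returns the index as markdown plus a map of page -> pages linking to it.
    """
    backlinks = {name: [] for name in pages}
    entries = []
    for name in sorted(pages):
        entries.append(f"- [[{name}]]: {first_body_line(pages[name])}")
        for target in outgoing_links(pages[name]):
            if target in backlinks and target != name:
                backlinks[target].append(name)
    return "\n".join(["# Index", *entries]), backlinks


def missing_pages(pages):
    """Health check: link targets with no page yet (new article candidates)."""
    linked = {t for text in pages.values() for t in outgoing_links(text)}
    return sorted(linked - set(pages))


def load_wiki(wiki_dir):
    """Read every .md file in a directory into the `pages` mapping."""
    return {p.stem: p.read_text(encoding="utf-8") for p in Path(wiki_dir).glob("*.md")}
```

A typical use would be `index_md, backlinks = build_index(load_wiki("wiki"))`, where `wiki` is a hypothetical directory name. Because the whole index is a single small markdown file, it can be placed directly in the model's context, which is the reason retrieval infrastructure is unnecessary at this scale.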

✈️ KEY TAKEAWAYS

Karpathy is describing what every VC firm's internal knowledge system should look like: meeting notes, deal memos, portfolio updates, and thesis documents compiled into an LLM-maintained wiki that compounds over time. The shift from "manipulating code" to "manipulating knowledge" is the unlock. Firms that build this now will have a compounding information advantage that gets wider every quarter.

The Most Powerful AI Skill: Auto-Discovering What to Automate

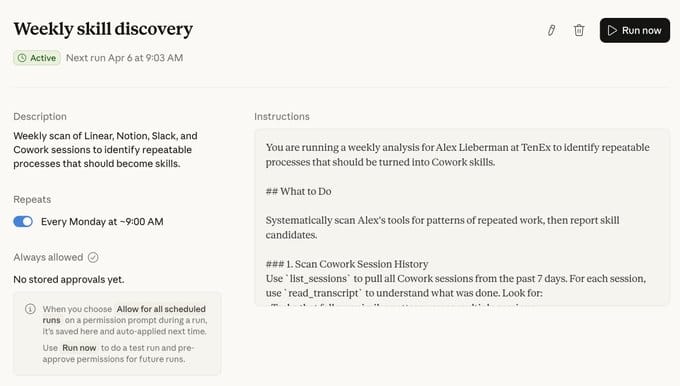

Alex Lieberman (co-founder of Morning Brew) shared his most powerful Claude workflow: a weekly skill that automatically scans his tools to identify what should be automated next.

Every Monday at 9am, Cowork scans Linear, Notion, Slack, Gmail, and prior Cowork sessions to identify repeatable processes that should become new skills: Rather than manually deciding what to automate, the system observes workflow patterns across tools and proactively recommends automation candidates. The meta-skill (a skill that creates skills) removes the biggest bottleneck in AI adoption: knowing what to automate in the first place.

The approach allows anyone to "proactively become more AI-native without making it a full-time job": Lieberman frames this as the simplest but most impactful pattern: instead of spending hours auditing your own workflows, let the AI observe and recommend. The system compounds because each new skill creates more data for the meta-skill to analyze.

The post generated 207K views and 2.6K bookmarks, signaling massive demand for practical AI workflow patterns over theoretical frameworks: The engagement ratio (bookmarks to likes) suggests people are saving this for implementation, not just passive consumption. The pattern is tool-agnostic and applies to any knowledge work environment.
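The core of the "skill that creates skills" pattern is spotting work that recurs. The sketch below is a hypothetical, tool-agnostic illustration of that one step, not Lieberman's setup: it assumes activity has already been exported into simple `Event` records (no Linear, Notion, Slack, or Gmail API is called), and it flags any action that shows up in several distinct weeks. The `Event` fields and the `min_weeks` threshold are assumptions chosen for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    tool: str    # label only, e.g. "gmail"; no real integration is used
    action: str  # short normalized description of what was done
    week: int    # week number in which the action happened


def automation_candidates(events, min_weeks=3):
    """Return (tool, action) pairs seen in at least `min_weeks` distinct weeks.

    Results are ordered by total occurrences, most frequent first.
    """
    counts = Counter()
    weeks_seen = {}
    for event in events:
        key = (event.tool, event.action)
        counts[key] += 1
        weeks_seen.setdefault(key, set()).add(event.week)
    recurring = [key for key, weeks in weeks_seen.items() if len(weeks) >= min_weeks]
    return sorted(recurring, key=lambda key: (-counts[key], key))
```

Requiring recurrence across distinct weeks, rather than a raw count, is a deliberate design choice in this sketch: a burst of activity on a single day is usually a one-off, while something done every week is a stronger candidate for a reusable skill. In the workflow described above, an LLM would do the harder part of normalizing messy activity into comparable actions.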

✈️ KEY TAKEAWAYS

The "skill that creates skills" pattern is the clearest example yet of compounding AI automation. For VC firms and portfolio companies alike, the first step is not building a complex AI stack but deploying a simple meta-process that continuously identifies automation opportunities. This is how AI adoption scales without dedicated headcount: the system teaches itself where to add value next.

That’s it for today!

Stay driven,

Andre

PS: Don’t forget to nominate the most innovative funds & thought leaders here for the Data Driven VC Landscape 2026