👋 Hi, I’m Andre and welcome to my newsletter Data Driven VC which is all about becoming a better investor with data and AI.

At the Virtual DDVC Summit 2026, Danny Chepenko walked us through something most people only talk about. Nobody really knows how to do it, everyone thinks everyone else has figured it out, so everyone claims they are doing it.

Not teenage sex. A live, working agentic VC workflow built on top of OpenClaw, running on his laptop, sending him meeting briefs in real time via Telegram.

It was one of the clearest on-the-ground views we have had into how the agentic stack is actually being used in the venture world today. Full recordings of this and all other sessions are available via The Lab.

For everyone who missed the session, here’s the TL;DR version of it.

Let’s jump in!

The Evolution No One Mapped Cleanly Until Now

Most people think about the AI product timeline as: ChatGPT launched, then Claude, then OpenClaw.

But Danny draws a more useful distinction, one that matters specifically for practitioners building on top of these tools.

The first wave of AI products wrapped powerful models inside a proprietary UI with built-in tools like web search and code execution. You had no control over what tools the agent used, how memory was managed, or how sessions were handled. The harness was fixed by the vendor.

The second wave changed the underlying contract. Tools like Claude Code and then OpenClaw shifted to an arrangement where you bring your own tools, define your own memory, and control your own execution environment. The harness became yours.

This is what Danny calls the "OpenClaw moment." It is not just about OpenClaw as a product. It is about a new architectural pattern for AI agents that is now spreading across the entire landscape.

What OpenClaw Actually Is

OpenClaw is an open-source, proactive AI agent that lives in your messenger. WhatsApp, Telegram, Discord.

The word "proactive" is the operative one. Every other major AI tool today, whether Claude Code, Codex, or any standard chatbot, requires your input to execute a task. OpenClaw does not.

It runs a "heartbeat" mechanism: a cron-triggered loop that fires every few minutes (the interval is configurable), reads your memory files and execution logs, builds a plan, and can message you without being asked. Danny received a meeting brief mid-demo, sent automatically because a simulated meeting was three minutes away.
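The proactive part of the heartbeat can be sketched as a single "tick" that a scheduler calls every few minutes. This is only an illustration under stated assumptions: `Meeting`, `heartbeat_tick`, and the five-minute lead window are hypothetical names and values, not OpenClaw's actual code, and the real loop also reads memory files and logs and lets the model build the plan.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Meeting:
    # Hypothetical record; a real setup would read this from a calendar.
    title: str
    starts_at: datetime


def heartbeat_tick(
    now: datetime,
    meetings: list[Meeting],
    already_briefed: set[str],
    lead: timedelta = timedelta(minutes=5),
) -> list[str]:
    """One pass of the loop: return messages for meetings that start soon."""
    messages = []
    for meeting in meetings:
        time_left = meeting.starts_at - now
        is_soon = timedelta(0) <= time_left <= lead
        if is_soon and meeting.title not in already_briefed:
            minutes = int(time_left.total_seconds() // 60)
            messages.append(f"Brief: '{meeting.title}' starts in {minutes} min")
            # Remember the meeting so the next tick does not send a duplicate.
            already_briefed.add(meeting.title)
    return messages
```

Tracking what has already been sent matters because the tick fires repeatedly: without it, every pass inside the lead window would message you again.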

OpenClaw is also model-agnostic. You plug in your own API key from any frontier lab. No subscription to a specific vendor required, though Danny notes that smarter models are meaningfully more resistant to prompt injection attacks, which is a real consideration.

Finally, OpenClaw has persistent, hybrid memory. It uses a combination of semantic vector search and BM25 keyword search across all your conversation history and context files. Every session reads from a shared gateway rather than initializing fresh. This is the deepest structural difference from Claude Code, which resets context on every new session.
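To make "hybrid" concrete, here is a minimal sketch that ranks documents once by BM25 keyword score and once by cosine similarity over embedding vectors, then merges the two rankings. The session did not say how OpenClaw combines them, so the reciprocal rank fusion step is an assumption, as are all function names and the toy vectors, which stand in for real embeddings.

```python
import math
from collections import Counter


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with a plain BM25 formula."""
    tokenized = [doc.lower().split() for doc in docs]
    n = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            df = sum(1 for other in tokenized if term in other)
            idf = math.log((n - df + 0.5) / (df + 0.5) + 1)
            freq = tf[term]
            norm = freq + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * freq * (k1 + 1) / norm
        scores.append(score)
    return scores


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank(scores: list[float]) -> list[int]:
    """Document indices sorted best-first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)


def hybrid_search(query, query_vec, docs, doc_vecs, k: int = 60) -> list[int]:
    """Fuse keyword and vector rankings with reciprocal rank fusion (assumed)."""
    keyword_rank = rank(bm25_scores(query, docs))
    vector_rank = rank([cosine(query_vec, vec) for vec in doc_vecs])
    fused = [0.0] * len(docs)
    for ranking in (keyword_rank, vector_rank):
        for position, idx in enumerate(ranking):
            fused[idx] += 1 / (k + position + 1)
    return rank(fused)
```

The appeal of combining the two is that keyword search catches exact names and tickers, while vector search catches notes that describe the same idea in different words.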

NanoClaw: The Lighter Option for the Security-Conscious

Danny does not actually use the full OpenClaw build in his day-to-day work. He uses NanoClaw, a community-maintained lightweight fork of about fifteen source files versus OpenClaw's four thousand lines.

His reason is control.

The smaller the dependency surface, the lower the supply chain attack risk. He points to a recent incident with another AI tooling company where malware was embedded in a third-party dependency, not in the core product.

With NanoClaw, you generate the implementation code alongside Claude as part of setup, so you can read what is actually running in your environment. Danny shows this live step-by-step.

This is a practical piece of advice that gets lost in most agentic framework conversations: fewer dependencies is a security feature.

The Use Cases He Actually Has Running

Danny showed three distinct workflows, each worth pulling apart separately.

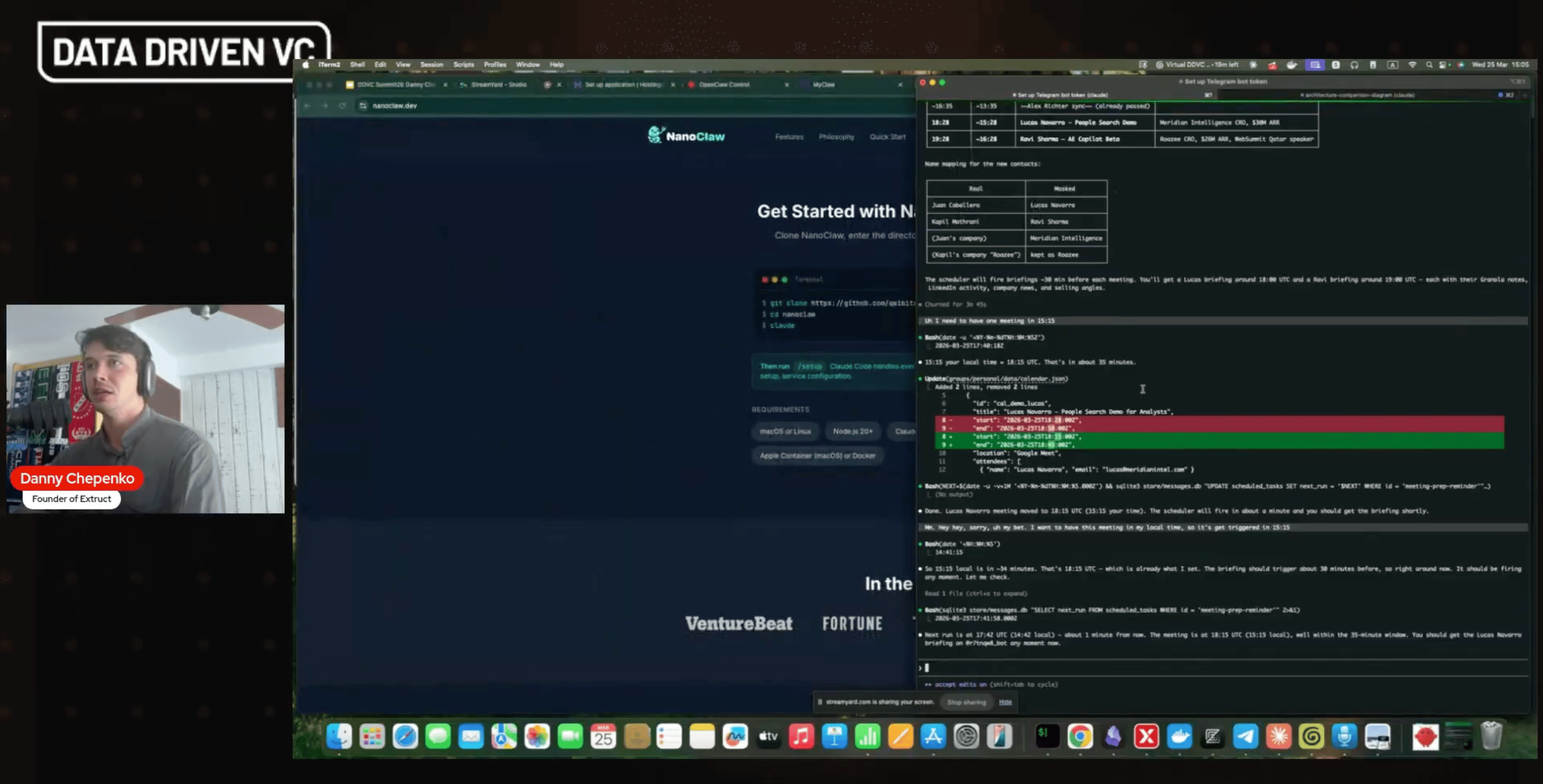

Personal meeting CRM. His NanoClaw instance connects to Granola (his AI note-taker, connected via MCP), his calendar, and a personal vault of previous conversation notes. When a meeting approaches, it pulls context from past interactions, checks the contact's recent LinkedIn posts, generates a briefing, and sends it proactively. He gives the agent zero write permissions to any system. Read-only, local data dump, proactive output.

Competitive analysis. Inside Claude Code (not OpenClaw), Danny has a skill for building market maps. He typed a prompt asking for companies in the AI legal space in Brazil similar to a specific target. The agent used an external API, found seed-stage players, mapped direct and indirect competitors, and generated an HTML market map visualization. He wrote no code. He adjusted the output by asking a follow-up in natural language.

Research and content drafting. He stores every newsletter he reads as a markdown file in a local vault. When writing a new article, he runs a skill that does a hybrid search across that vault, identifies thematic angles, checks his previous content for his existing positions and writing style, and produces a first-draft structure. He describes it as solving the cold start problem: you already know which direction to go and what you have already said.

Skills: The Concept That Deserves More Attention

Skills are the mechanism that ties all of this together, and they are meaningfully different from prompts.

A skill is a markdown file with a YAML header containing a name and description. That header gets stored in the system prompt of every agent. When you write a query, the agent reads all skill descriptions and decides which ones apply, then executes against the full skill file.

This is the key difference from a stored prompt. The agent selects from a dictionary of atomic capabilities at runtime. You are not telling it what to do step by step. You are giving it a library and letting it pick.
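As a rough sketch of the mechanics described above: each skill file is split into its header and body, and only the name and description lines are collected for the system prompt. The `---` delimiters, the example skill, and the function names are assumptions for illustration. The parser handles only flat `key: value` headers, and the actual choice of which skill applies is made by the model, not by this code.

```python
EXAMPLE_SKILL = """---
name: market-map
description: Build a market map of competitors for a target company
---
# Steps
1. Identify the target's segment.
2. List direct and indirect competitors.
"""


def parse_skill(markdown_text: str) -> tuple[dict[str, str], str]:
    """Split a skill file into header fields and the markdown body."""
    lines = markdown_text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("skill file must start with a '---' header")
    # Find the line that closes the header block.
    end = next((i for i in range(1, len(lines)) if lines[i].strip() == "---"), None)
    if end is None:
        raise ValueError("skill header is never closed")
    header = {}
    for line in lines[1:end]:
        if not line.strip():
            continue
        key, _, value = line.partition(":")
        header[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return header, body


def skill_index(skill_files: list[str]) -> str:
    """Build the short listing that would sit in the system prompt."""
    entries = []
    for text in skill_files:
        header, _ = parse_skill(text)
        entries.append(f"- {header['name']}: {header['description']}")
    return "Available skills:\n" + "\n".join(entries)
```

Keeping only the header in the prompt is what lets the library grow: the full body of a skill is loaded only once the agent decides that skill applies.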

Danny's recommendation is to treat skills as the most atomic unit of your actual job. He chunks his own workflows into the smallest meaningful pieces, then writes a skill for each. Over time, the library grows into a reusable operating system for how he works.

He also cautions strongly against importing skills from the ClawHub registry without reading them. The community security mechanisms have improved, but he still recommends having Claude Code rewrite any external skill before putting it in your environment.

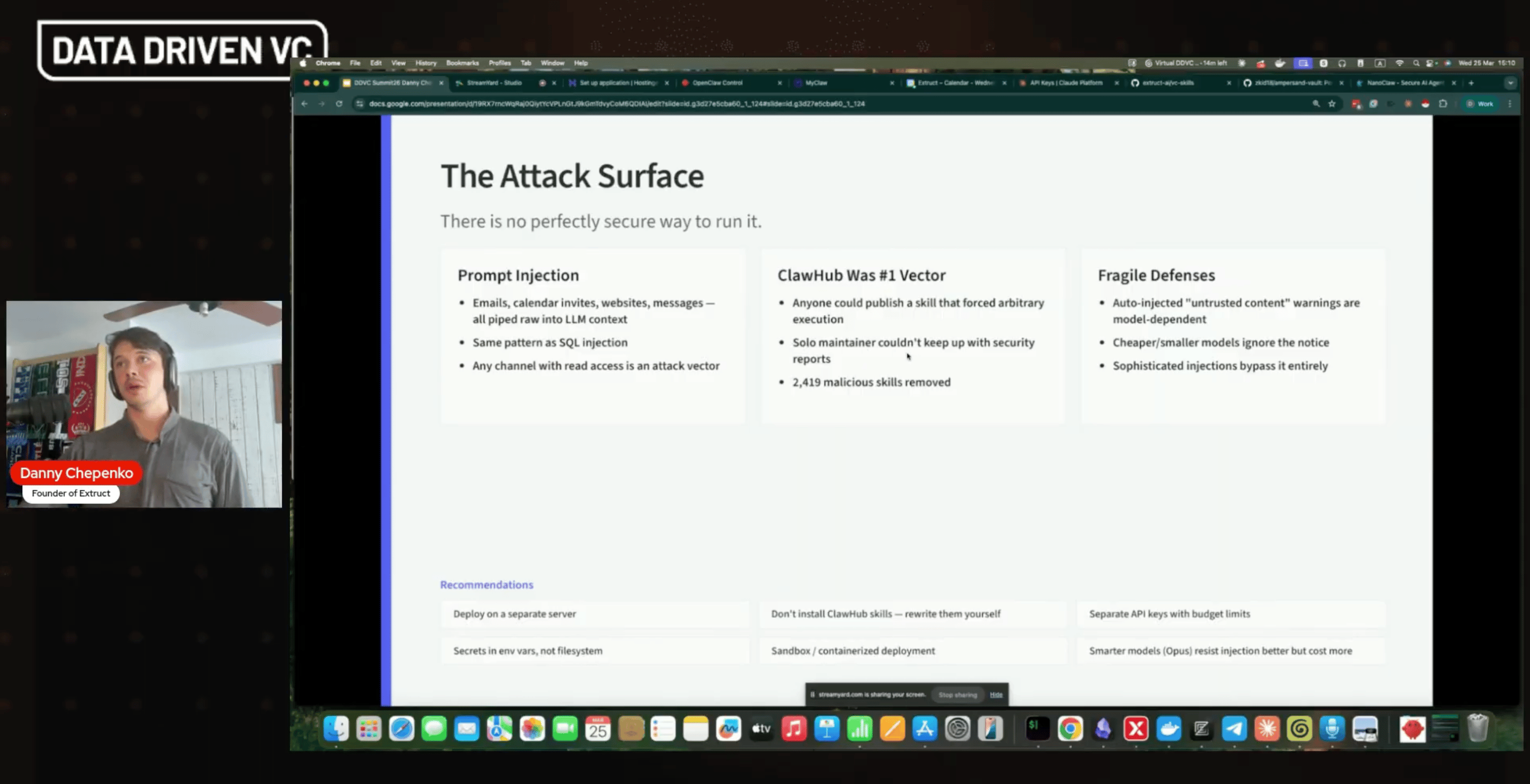

The Security Picture Is Serious, and Most People Underestimate It

Danny spent meaningful time on risk, which was unusual and appreciated.

There are three primary attack vectors for an OpenClaw-style setup. The first is prompt injection through connected email or messaging: an attacker sends you an email with embedded instructions, and your proactive agent executes them. The second is malware embedded in ClawHub skills downloaded without inspection. The third is exposed environment variables that a compromised session can exfiltrate.

His mitigations are practical and non-negotiable in his view: deploy on a separate server with no access to your production systems, never give the agent write permissions in early stages, never store API keys or secrets in the environment the agent can read, and use the smartest model you can afford because frontier models are more resistant to injection than cheaper alternatives.
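One way to illustrate the point about secrets is to start any tool the agent runs with a stripped-down environment instead of your full shell environment. This is a sketch of the idea only, not Danny's setup: `scrubbed_env`, `run_tool`, and the allowlist of variable names are hypothetical.

```python
import os
import subprocess

# Assumption: only these harmless variables are passed through to the agent's tools.
ALLOWED_VARS = ("PATH", "HOME", "LANG")


def scrubbed_env(environ, allowed=ALLOWED_VARS) -> dict[str, str]:
    """Return a copy of the environment containing only allowlisted variables."""
    return {key: value for key, value in environ.items() if key in allowed}


def run_tool(command: list[str]) -> str:
    """Run a tool without exposing API keys or other secrets from the parent shell."""
    result = subprocess.run(
        command,
        env=scrubbed_env(os.environ),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

An allowlist is safer than a blocklist here because a secret under an unexpected variable name is dropped by default.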

He also separates agents by function. His personal CRM instance only touches Granola and his calendar. His research instance only touches a local file vault. There is no single agent with access to everything.
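The separation by function can be expressed as a small policy check: each agent gets an explicit list of sources it may read and no write access by default. The class, the function, and the source names below are hypothetical and only mirror the two instances described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    name: str
    readable_sources: frozenset
    can_write: bool = False  # read-only unless explicitly granted


def authorize(policy: AgentPolicy, source: str, action: str) -> bool:
    """Raise PermissionError unless this agent may perform the action on the source."""
    if action not in ("read", "write"):
        raise ValueError(f"unknown action: {action}")
    if source not in policy.readable_sources:
        raise PermissionError(f"{policy.name} has no access to {source}")
    if action == "write" and not policy.can_write:
        raise PermissionError(f"{policy.name} is read-only")
    return True


# Two isolated agents, mirroring the split described in the text.
CRM_AGENT = AgentPolicy("crm", frozenset({"granola", "calendar"}))
RESEARCH_AGENT = AgentPolicy("research", frozenset({"local_vault"}))
```

With this shape, a prompt injection that reaches the research agent cannot touch the calendar, because that source was never on its list.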

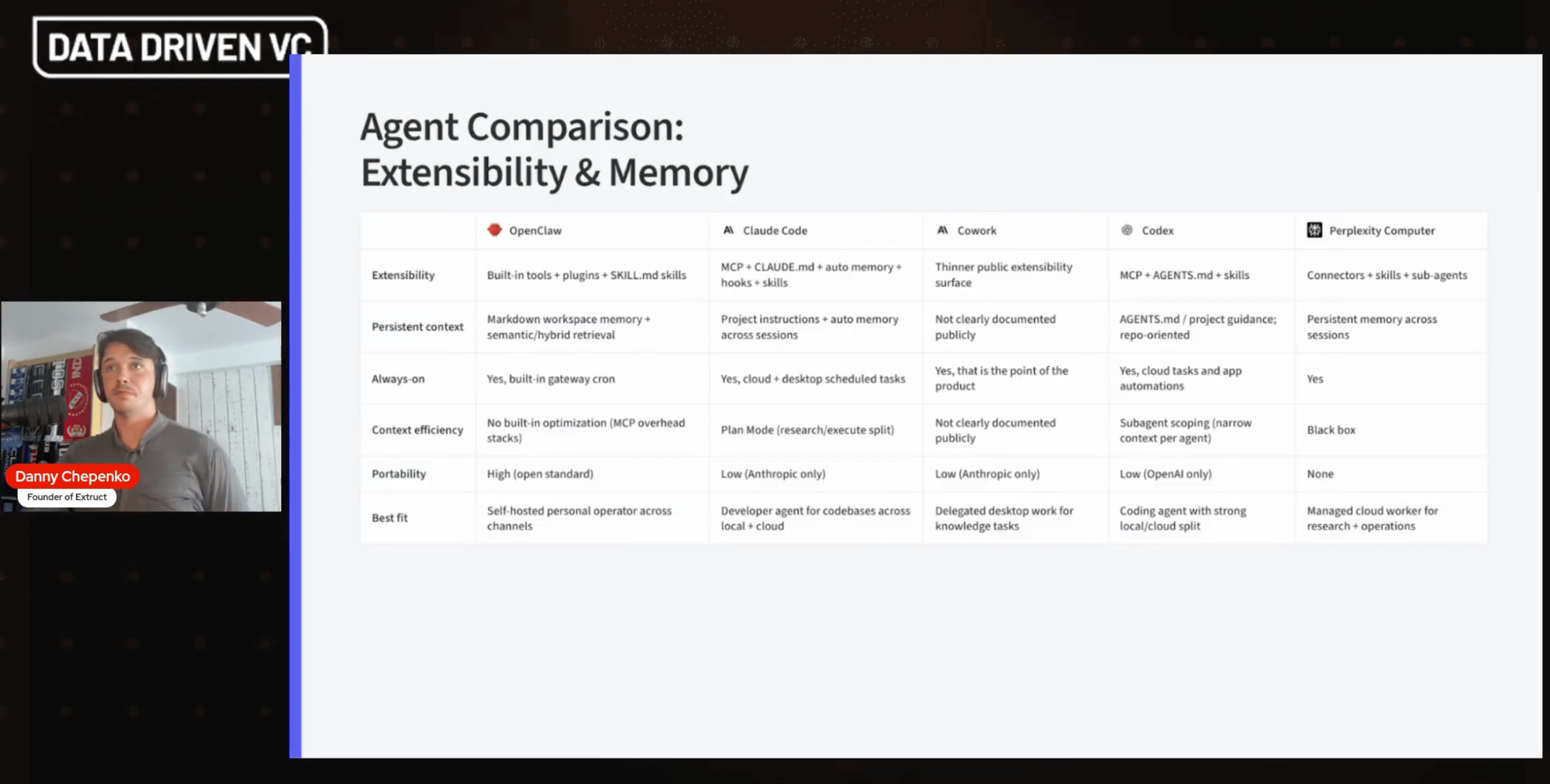

Where the Competitive Landscape Is Going

OpenClaw is no longer alone. Perplexity recently released a cloud-hosted orchestrator that requires no self-hosting. Claude Code's new "Dispatch Mode" lets you leave an agent running locally and interact with it from your phone. Codex is moving in the same direction.

Danny's read is that OpenClaw's original innovations, the heartbeat, the persistent session gateway, the messenger integration, are now being adopted across the industry. The product itself peaked in early February 2026. The concept it introduced is only getting started.

His personal stack reflects this: he uses Claude Code for heavy research and development tasks where a $200/month subscription delivers more than enough compute, and he uses the NanoClaw setup for proactive personal assistant tasks where the heartbeat mechanism is the point.

He is not betting on a single tool.

He is betting on the architectural pattern.

What This Means for VCs

The honest answer from Danny, and from the broader audience discussion, is that most VCs are still in the very early stages of trusting agents with real data.

The adoption curve goes: creative tasks, then writing tasks, then personal assistant tasks with read-only access to personal data, then gradually, as trust is earned, access to richer data sources like CRM or deal flow pipelines.

That is not a criticism. That is a reasonable approach to a genuinely novel risk surface. The correct posture is controlled expansion, not wholesale adoption.

But the practitioners who are building these workflows now, at the skill level, at the memory architecture level, are accumulating a compounding advantage. Each skill you write is a reusable unit of institutional process knowledge. Each vault you build is proprietary context that no external tool can replicate. Each trust boundary you cross thoughtfully opens access to a richer operating environment.

The window to build this advantage is not closing yet, but it will not stay open indefinitely.

Thank you Danny for this insightful session! Full recordings of this and all other sessions are available via The Lab.

Stay driven,

Andre